2.5 Attacks on ML models

Image classification models in particular have been shown to be susceptible to attacks that cause wrong classifications. This could lead to:

- Traffic sign misclassification

- targeted attack \(\implies\) force a certain prediction

- untargeted attack \(\implies\) force misclassification, but not a particular prediction

- Avoiding face detection

How an attack can be performed is described by Goodfellow et al. (I. J. Goodfellow, Shlens, and Szegedy 2014).

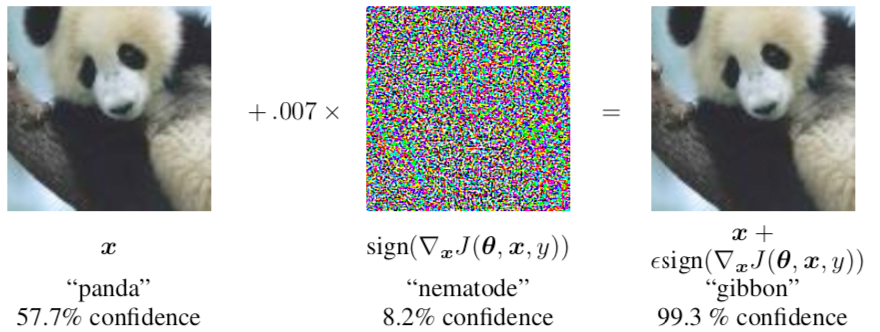

2.5.1 Adding noise to an image leads to misclassification

Figure from Goodfellow et al. (I. J. Goodfellow, Shlens, and Szegedy 2014)
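The cited paper introduces the fast gradient sign method (FGSM), which perturbs the input by a small step in the direction of the sign of the loss gradient. The following is a minimal sketch of that idea, assuming a toy logistic-regression classifier instead of the image network from the figure. The weights `w`, bias `b`, input `x`, and step size `eps` are hypothetical values chosen only for illustration, and the function names are not from the paper.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def predict(x, w, b):
    """Return the predicted class (0 or 1) of a toy logistic-regression model."""
    return int(sigmoid(w @ x + b) >= 0.5)

def loss_gradient(x, y, w, b):
    """Gradient of the binary cross-entropy loss with respect to the input x."""
    return (sigmoid(w @ x + b) - y) * w

def fgsm_untargeted(x, y_true, w, b, eps):
    """Step up the loss of the true label: force any misclassification."""
    return x + eps * np.sign(loss_gradient(x, y_true, w, b))

def fgsm_targeted(x, y_target, w, b, eps):
    """Step down the loss of a chosen label: force that particular prediction."""
    return x - eps * np.sign(loss_gradient(x, y_target, w, b))

# Hypothetical model parameters and input (assumptions, not from the paper).
w = np.array([1.0, -2.0, 0.5])
b = 0.0
x = np.array([0.2, -0.1, 0.4])  # the toy model assigns this input to class 1

x_untargeted = fgsm_untargeted(x, y_true=1.0, w=w, b=b, eps=0.3)
x_targeted = fgsm_targeted(x, y_target=0.0, w=w, b=b, eps=0.3)

print("clean:", predict(x, w, b))
print("untargeted:", predict(x_untargeted, w, b))
print("targeted (target 0):", predict(x_targeted, w, b))
```

Each input component changes by at most `eps`, yet the predicted class flips. With only two classes the targeted and untargeted variants produce the same perturbation; they differ once there are more than two classes to choose from.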

2.5.2 But what about attacks on human perception?

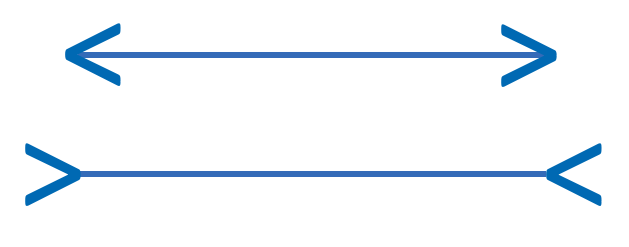

Which statement is correct?

- Top line longer

- Bottom line longer

- Both are same length

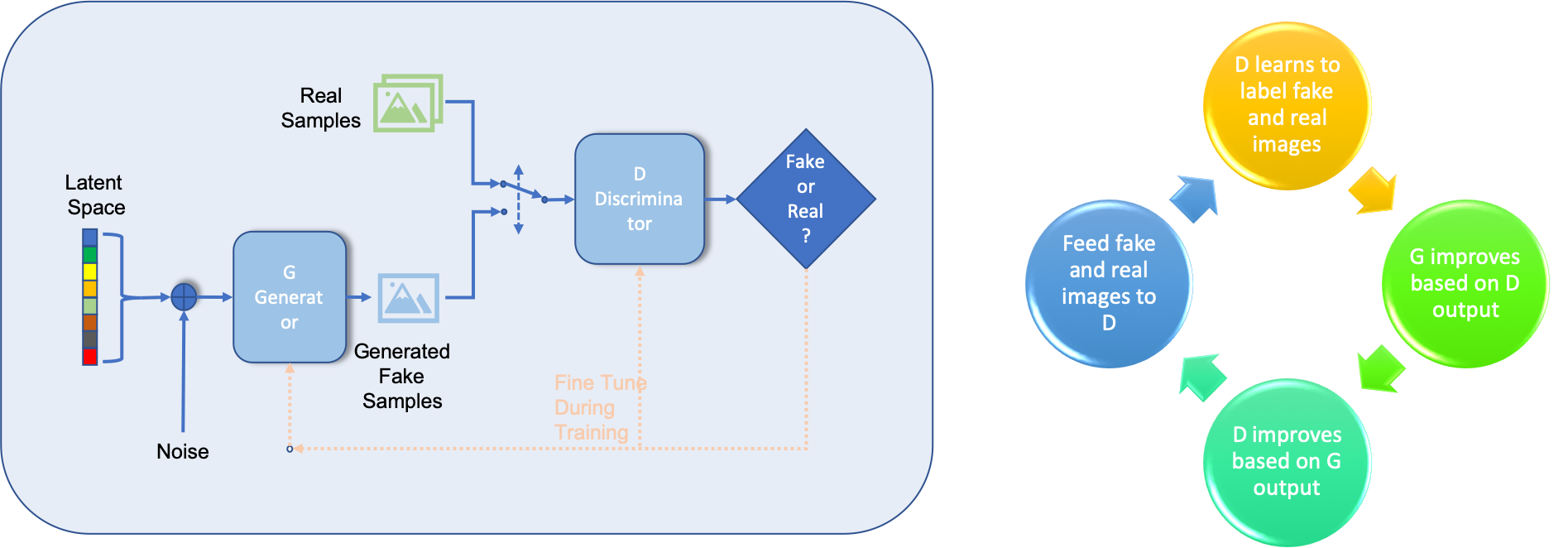

Is this a picture of a real person?

Look at the picture: is it a real person or an animation?

Figure from https://commons.wikimedia.org/wiki/File:Woman_1.jpg (Image Credit: Owlsmcgee [Public domain])

The image was created using a generative adversarial network (GAN). The principle is shown below; for a detailed description see https://medium.com/ai-society/gans-from-scratch-1-a-deep-introduction-with-code-in-pytorch-and-tensorflow-cb03cdcdba0f

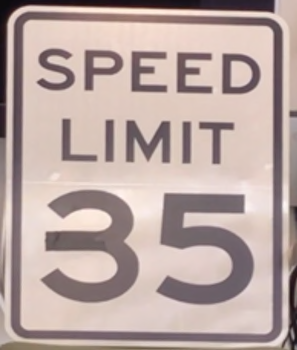
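As a compact summary of the principle, the standard GAN formulation can be written as a two-player minimax game between a generator \(G\) and a discriminator \(D\). The symbols here are assumptions not used elsewhere in these notes: \(p_{\text{data}}\) is the distribution of real images, \(p_z\) is the noise distribution fed to the generator, and \(D(x)\) is the discriminator's estimated probability that \(x\) is real.

\[
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
\]

The discriminator tries to make the expression large by telling real and generated images apart, while the generator tries to make it small by producing images the discriminator accepts as real.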

2.5.3 Model Hacking ADAS to Pave Safer Roads for Autonomous Vehicles

A McAfee Advanced Threat Research (ATR) hack caused cars to drive 50 mph faster than the speed limit by tricking the camera with a piece of tape on the traffic sign. This simple attack tricked the Advanced Driver Assist Systems (ADAS) into driving the car at 85 mph instead of 35 mph. A detailed description of the hack can be found at https://www.mcafee.com/blogs/other-blogs/mcafee-labs/model-hacking-adas-to-pave-safer-roads-for-autonomous-vehicles/

Facts about ATR hack:

- McAfee Advanced Threat Research (ATR)

- MobilEye camera system

- utilized by over 40 million vehicles (incl. Tesla Hardware Pack 1)

- MobilEye reads a 35 mph sign as an 85 mph sign

Looking ahead, Steve Povolny, Head of McAfee Advanced Threat Research, wrote:

In order to drive success in this key industry and shift the perception that machine learning systems are secure, we need to accelerate discussions and awareness of the problems and steer the direction and development of next-generation technologies. Puns intended.

Steve Povolny, Head of McAfee Advanced Threat Research

2.5.3.1 Press coverage

It was interesting to see how the media covered the hack:

"TESLA CARS TRICKED INTO AUTONOMOUSLY ACCELERATING 50 MPH USING SPEED SIGN ALTERED WITH SMALL PIECE OF TAPE" (Newsweek)

"Tesla in Autopilot mode tricked into speeding using terrifying hack" (The Telegraph)